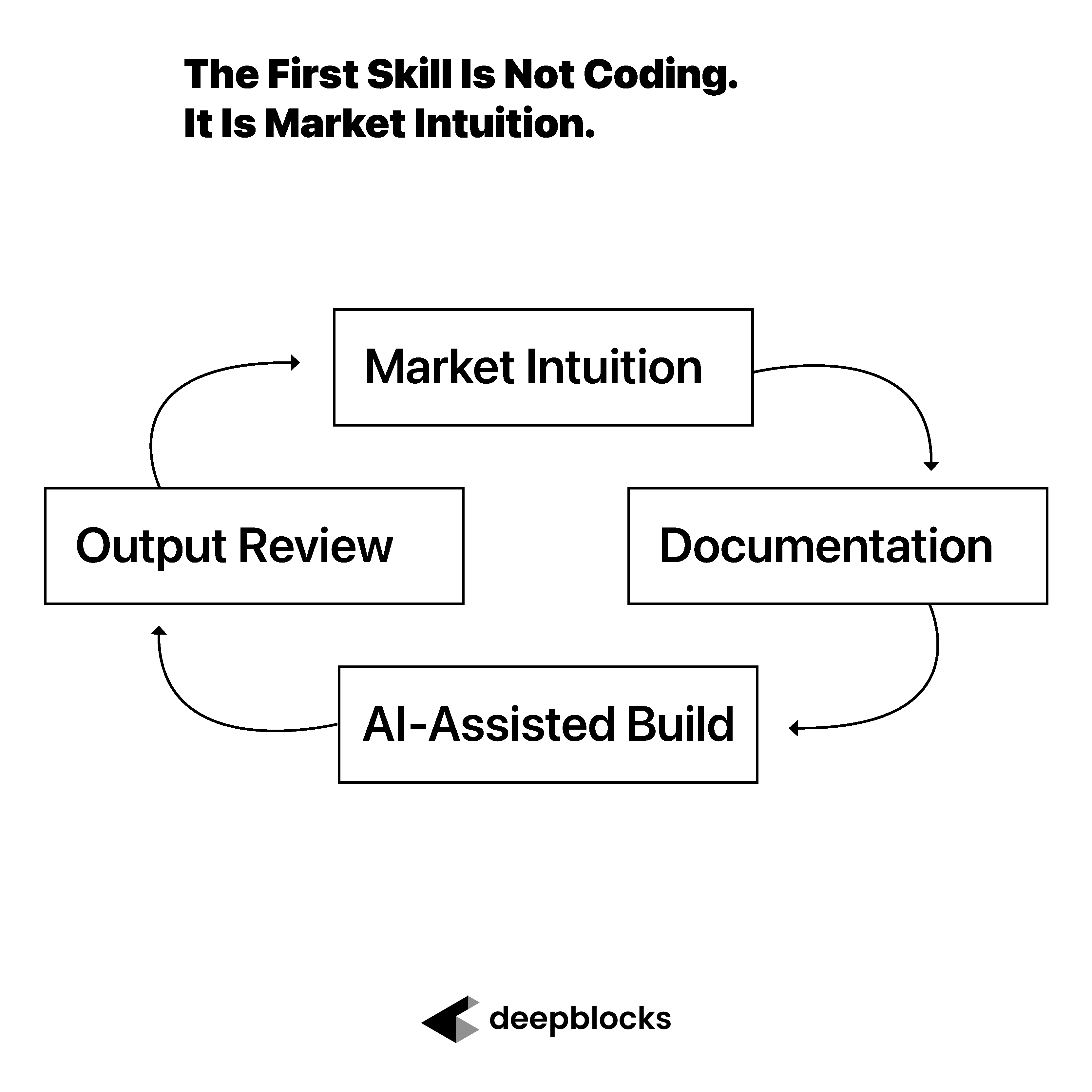

The First Skill Is Not Coding

When industry experts ask me what matters most when building with AI, I tell them two things.

Impeccable documentation.

And intuition.

Not vague intuition.

Market intuition.

That distinction matters because AI has made the act of building easier, but it has not made judgment easier to fake. A real estate expert using AI to build a tool may not need to write every line of code, but they still need to know what the system is supposed to do, what the output should look like, and when something feels wrong.

That is the first real skill.

Not coding.

Knowing what “good” looks like.

Why Documentation Matters When Building With AI

Before market intuition can improve an AI workflow, the expert has to document the workflow clearly.

Documentation is how expertise becomes instruction.

If the expert cannot explain the process, the AI cannot reliably help build it. This is true whether the goal is to create a lead generation workflow, a zoning analysis tool, a feasibility calculator, a market research assistant, or an internal operations dashboard.

Good documentation should describe the steps in the workflow, the inputs required, the assumptions being made, the edge cases to watch for, and the definition of a successful output.

In real estate, this is especially important because the industry is full of assumptions that professionals often carry in their heads.

What counts as a viable parcel?

Which zoning rules matter most?

When is a land use label misleading?

Which rent assumptions are realistic?

What makes a development yield believable?

Which properties should be excluded even if they technically match the filter?

AI cannot guess those standards reliably without guidance.

The expert has to document them.
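One way to make that documentation concrete is to write it as a structured record before asking an AI tool to build anything. The sketch below is a minimal illustration, not a prescribed format: the `WorkflowSpec` class, its field names, and the sample entries are all hypothetical, chosen only to mirror the five parts of good documentation listed above.

```python
from dataclasses import dataclass


@dataclass
class WorkflowSpec:
    """Written description of a workflow an AI tool is asked to help build.

    The fields are illustrative assumptions that mirror the checklist in
    the text: steps, inputs, assumptions, edge cases, and success definition.
    """
    name: str
    steps: list[str]
    inputs: list[str]
    assumptions: list[str]
    edge_cases: list[str]
    success_definition: str

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty."""
        return [section for section, value in vars(self).items() if not value]


# Hypothetical example entries, for illustration only.
spec = WorkflowSpec(
    name="Lead generation screen",
    steps=["Filter parcels by size", "Compare zoning", "Estimate what could be built"],
    inputs=["Parcel records", "Zoning data"],
    assumptions=["Land use labels can differ in meaning between counties"],
    edge_cases=[],           # not written down yet
    success_definition="",   # not written down yet
)

print(spec.missing_sections())  # ['edge_cases', 'success_definition']
```

The useful part is not the code itself. It is that an empty section becomes visible before the AI is asked to fill the gap with a guess.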

Market Intuition Is the Quality-Control Layer

Documentation helps AI understand what to build.

Market intuition helps the expert know whether the result is right.

Market intuition is the ability to look at an output and know something is wrong before you can fully explain why.

That may sound subjective, but it is not random. It comes from pattern recognition built over time. It comes from seeing hundreds or thousands of properties, deals, assumptions, markets, site plans, financial models, and data outputs.

A real estate expert may look at a feasibility result and immediately feel that the yield is too high.

They may look at a site and know the parking assumption is unrealistic.

They may see a rent number and know it does not match the submarket.

They may look at a lead list and know the properties are technically eligible but commercially useless.

That kind of intuition is hard to automate.

And it becomes even more important when AI is generating the first version of a workflow.

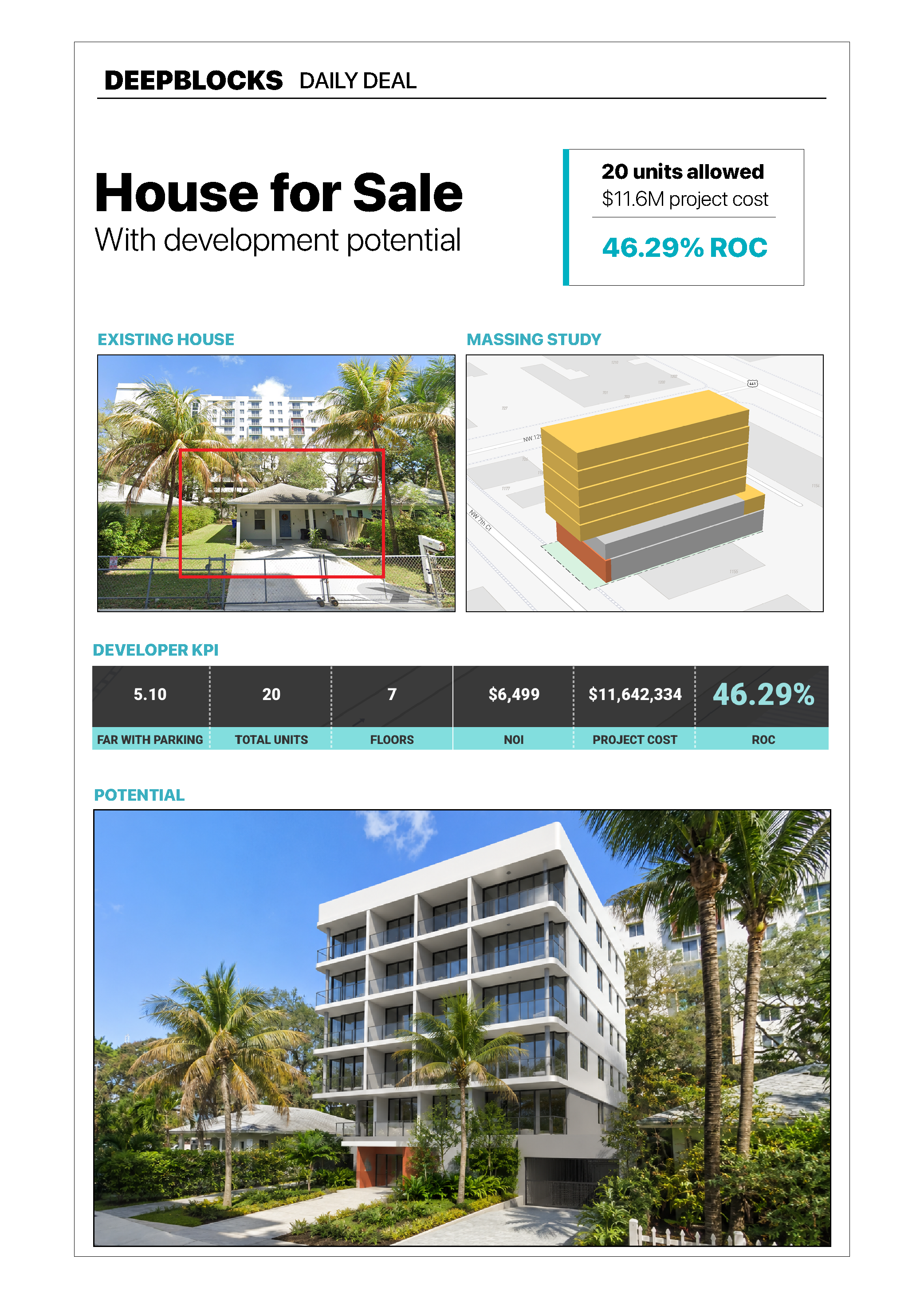

Real Estate Data Is Never as Clean as It Looks

I learned this through real estate data.

At Deepblocks, we have run hundreds of lead generation and property analysis pipelines. Many of these workflows depend on rules that sound simple at first.

Find parcels with certain characteristics.

Classify land uses.

Compare zoning.

Estimate what could be built.

Prioritize the best opportunities.

On paper, that sounds straightforward.

In practice, real estate data is never that clean.

A parcel may have the right size but the wrong access.

A property may have the right zoning but the wrong market conditions.

A dataset may use the same land use category across multiple counties, but the category may not mean the same thing everywhere.

A site may look viable in the data and fail once local rules, parking, setbacks, entitlement risk, or market demand are considered.

This is why AI-generated real estate analysis needs expert review.

The system may run correctly.

The output may still be wrong.

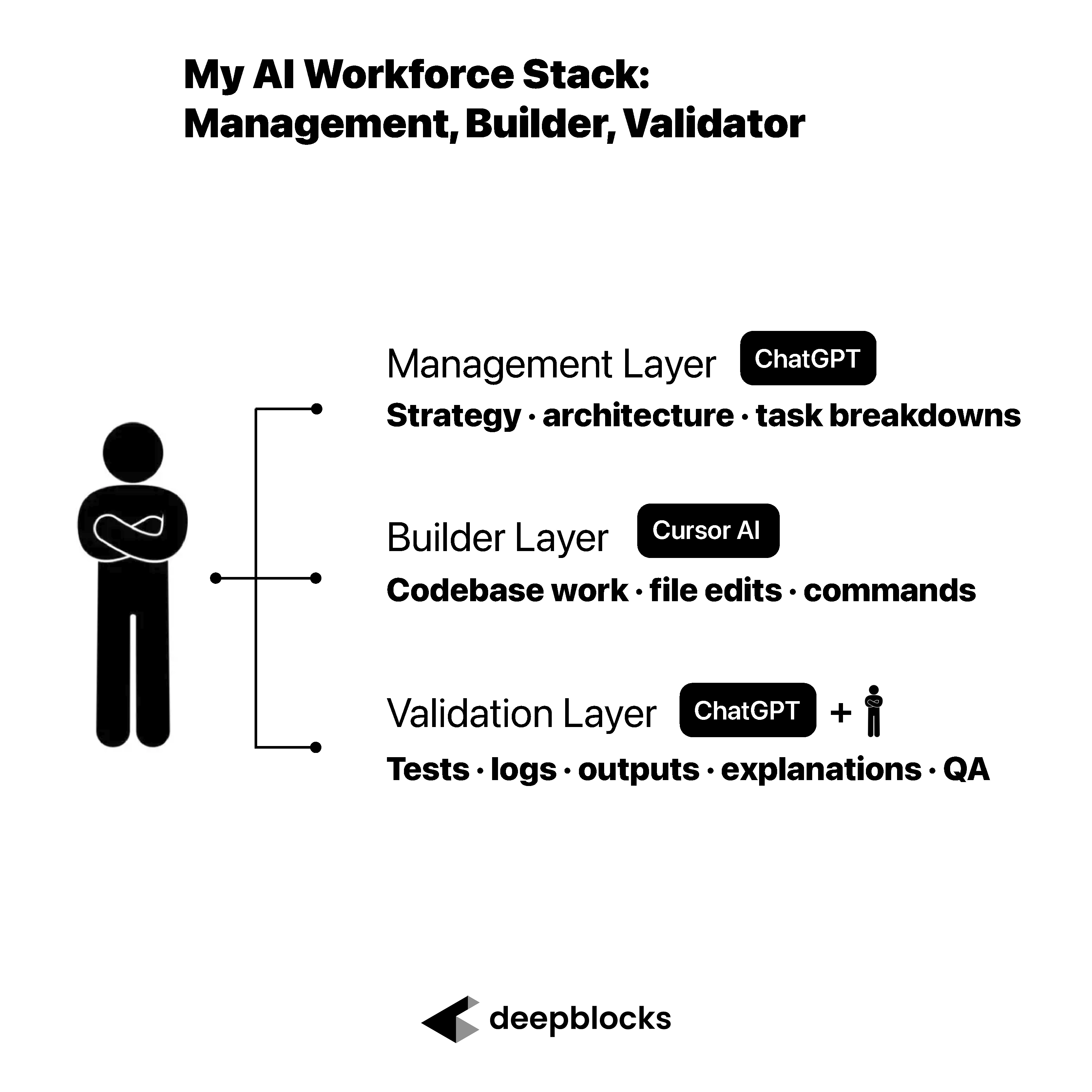

When the Code Worked, But the Meaning Was Wrong

I remember reviewing outputs from these workflows and noticing that something felt off.

The workflows were technically running. The pipeline was not broken in the obvious sense. The code was executing. The data was flowing. The output was being generated.

But the result did not match my understanding of the market.

When we dug in, the issue was not the code itself.

The issue was meaning.

A land use classification in one county did not mean exactly what it meant in another county. The system was treating the labels as equivalent, but from a real estate perspective, they were not equivalent.

That distinction changed the quality of the output.
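As a rough illustration of the fix, the sketch below treats a land use label as meaningful only together with its county. Everything in it is an assumption made for the example: the county names, the `VACANT` label, the normalized meanings, and the `normalize_land_use` helper are hypothetical and do not describe any real dataset or the actual pipeline.

```python
# Hypothetical mapping: (county, raw label) -> meaning agreed with a local expert.
# The same raw label is deliberately mapped to different meanings per county.
LAND_USE_MEANING = {
    ("County A", "VACANT"): "vacant_developable",
    ("County B", "VACANT"): "vacant_needs_review",  # assumed: label is broader here
}


def normalize_land_use(county: str, raw_label: str) -> str:
    """Translate a county's raw label into a shared meaning.

    Any county/label pair that no expert has reviewed is flagged
    instead of being silently treated as equivalent.
    """
    key = (county, raw_label.strip().upper())
    return LAND_USE_MEANING.get(key, "unmapped_needs_review")


# Hypothetical parcel records.
parcels = [
    {"id": "p1", "county": "County A", "land_use": "Vacant"},
    {"id": "p2", "county": "County B", "land_use": "Vacant"},
    {"id": "p3", "county": "County C", "land_use": "Vacant"},
]

normalized = {p["id"]: normalize_land_use(p["county"], p["land_use"]) for p in parcels}
print(normalized)
```

The design choice is that an unreviewed label produces a flag rather than a match, so the list of parcels only grows when someone with local knowledge has confirmed what the label means.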

This is the kind of issue that AI will not automatically solve for you.

An AI system can help write the code. It can help organize the workflow. It can even help identify inconsistencies if you ask the right questions.

But the expert still has to know which questions to ask.

Market intuition told me that something was wrong before the technical issue was obvious.

That is the value of expertise.

Technically Correct Is Not the Same as Contextually Correct

One of the biggest risks in AI-assisted software development is confusing technical success with real-world success.

A workflow can run without errors.

A model can produce an answer.

A dashboard can display a result.

A pipeline can return a list of properties.

But that does not mean the output is useful.

In real estate, an output has to survive the market.

It has to survive local rules.

It has to survive financial assumptions.

It has to survive the messy reality of how deals are evaluated.

This is where market intuition becomes the quality-control layer.

For a real estate expert, that might mean knowing when a development yield feels too high, when rent assumptions are unrealistic, when zoning capacity is being overstated, or when a site that looks good in data would never work in the real world.

AI can help produce the answer.

The expert has to judge whether the answer makes sense.

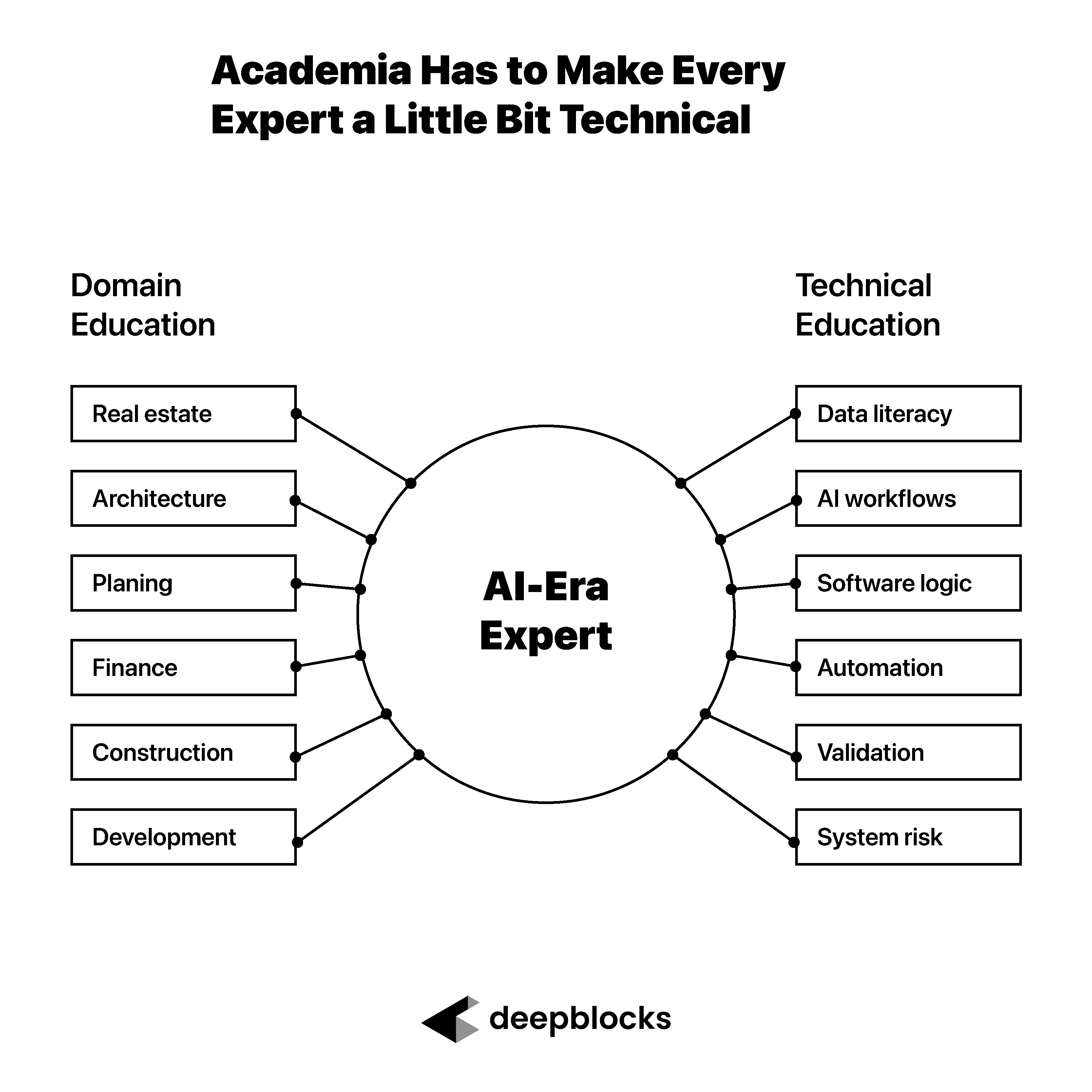

Why Experts Should Get Involved in Building

This is why experts should get involved in building AI tools.

Not because they need to become engineers.

Because their intuition is necessary to make the tool useful.

An engineer may be able to build a technically sound workflow. An AI coding tool may be able to generate the first version. But the expert knows where the workflow can fail in practice.

The expert knows which assumption is dangerous.

The expert knows which output will be trusted.

The expert knows what the user actually needs.

The expert knows when the result is misleading.

That knowledge should not sit outside the build process. It should be part of the build process.

AI gives experts a new way to participate earlier.

They can document the workflow.

They can prototype the logic.

They can review the outputs.

They can identify where the system is wrong.

They can improve the tool before a large engineering investment is made.

That is a very different role from simply handing requirements to a technical team.

How Real Estate Experts Can Start Building With AI

For experts who want to start building with AI, the first step is not to learn programming syntax.

The first step is to document a workflow you already understand deeply.

Choose a process you know well.

A lead generation process.

A site selection process.

A zoning review process.

A feasibility screen.

A market comparison.

A recurring report.

Then write down how the process actually works.

What are the inputs?

What are the steps?

What assumptions are being made?

What edge cases usually break the process?

What outputs are useful?

What outputs are misleading?

How would you know if the result is wrong?

That last question is critical.

If you cannot describe what a bad output looks like, you cannot reliably manage an AI workflow.

The goal is not to become technical overnight.

The goal is to turn your market intuition into a repeatable validation process.
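A repeatable validation process can start as a short list of plausibility ranges that the expert writes down and the workflow checks on every run. The sketch below assumes a hypothetical `FeasibilityResult` record, and the ranges in it are placeholder numbers, not market guidance. The real thresholds have to come from the expert and will differ by submarket.

```python
from dataclasses import dataclass


@dataclass
class FeasibilityResult:
    """Hypothetical output of a feasibility screen for one parcel."""
    parcel_id: str
    units_per_acre: float
    monthly_rent_per_sqft: float
    parking_spaces_per_unit: float


# Placeholder ranges for illustration only; an expert would set these per market.
PLAUSIBLE_RANGES = {
    "units_per_acre": (5.0, 150.0),
    "monthly_rent_per_sqft": (1.0, 4.5),
    "parking_spaces_per_unit": (0.5, 2.5),
}


def review_flags(result: FeasibilityResult) -> list[str]:
    """List every value that falls outside the expert's plausible range."""
    flags = []
    for field_name, (low, high) in PLAUSIBLE_RANGES.items():
        value = getattr(result, field_name)
        if not low <= value <= high:
            flags.append(f"{field_name}={value} outside expected range {low}-{high}")
    return flags


result = FeasibilityResult(
    parcel_id="p1",
    units_per_acre=320.0,  # the kind of yield that should feel too high
    monthly_rent_per_sqft=2.8,
    parking_spaces_per_unit=1.2,
)

flags = review_flags(result)
print(flags)  # ['units_per_acre=320.0 outside expected range 5.0-150.0']
```

A check like this does not replace the expert's review. It records what the expert already knows to look for, so the same mistake is caught the second time without relying on someone happening to notice it.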

Market Intuition Has to Be Trained

Market intuition is not magic.

It is learned.

It comes from repetition.

It comes from reviewing outputs.

It comes from seeing mistakes.

It comes from comparing what the system says with what the market actually does.

This is why experts need to get close to the AI build process. If they stay too far away, they will not develop the new kind of intuition required to manage AI systems.

The expert will not have an engineer’s intuition at first.

But they can build a different kind of intuition.

They can learn what AI is good at.

They can learn where it is fragile.

They can learn which instructions improve the output.

They can learn where data definitions break.

They can learn how to ask better questions.

They can learn how to validate the result.

That is the path.

Not becoming an engineer.

Becoming an expert who can manage intelligent systems.

Conclusion: AI Can Build Faster, But Experts Still Know What Good Looks Like

AI can help write code.

AI can organize workflows.

AI can generate prototypes.

AI can help identify patterns.

AI can make it easier for industry experts to begin building tools that previously required a technical team.

But AI does not replace market intuition.

In real estate, the expert still has to know when a development yield feels wrong, when a data field is misleading, when a zoning assumption is too generous, when a rent assumption is unrealistic, or when a site that looks promising in a dataset would fail in practice.

That is why the first skill is not coding.

It is documentation and market intuition.

Documentation tells AI what to build.

Market intuition tells the expert whether the result is worth trusting.