From Using AI to Managing an AI Workforce

Today, I think less in terms of “using AI” and more in terms of managing an AI workforce.

That does not mean the AI is autonomous in some magical way.

It means I use different AI environments for different jobs, and my role is to manage the workflow between them.

That distinction matters.

When most people talk about using AI, they usually mean one conversation. They ask a question, get an answer, copy the output, and move on.

But when you are trying to build something more complex, one conversation is not enough.

You need a place to think through the strategy.

You need a place to build.

You need a way to validate what was built.

You need documentation.

You need continuity.

You need a process for moving instructions, outputs, questions, and corrections between systems.

That is what I mean by an AI workforce stack.

It is not one AI doing everything.

It is a managed workflow across multiple AI roles.

The Three Layers of My AI Workforce Stack

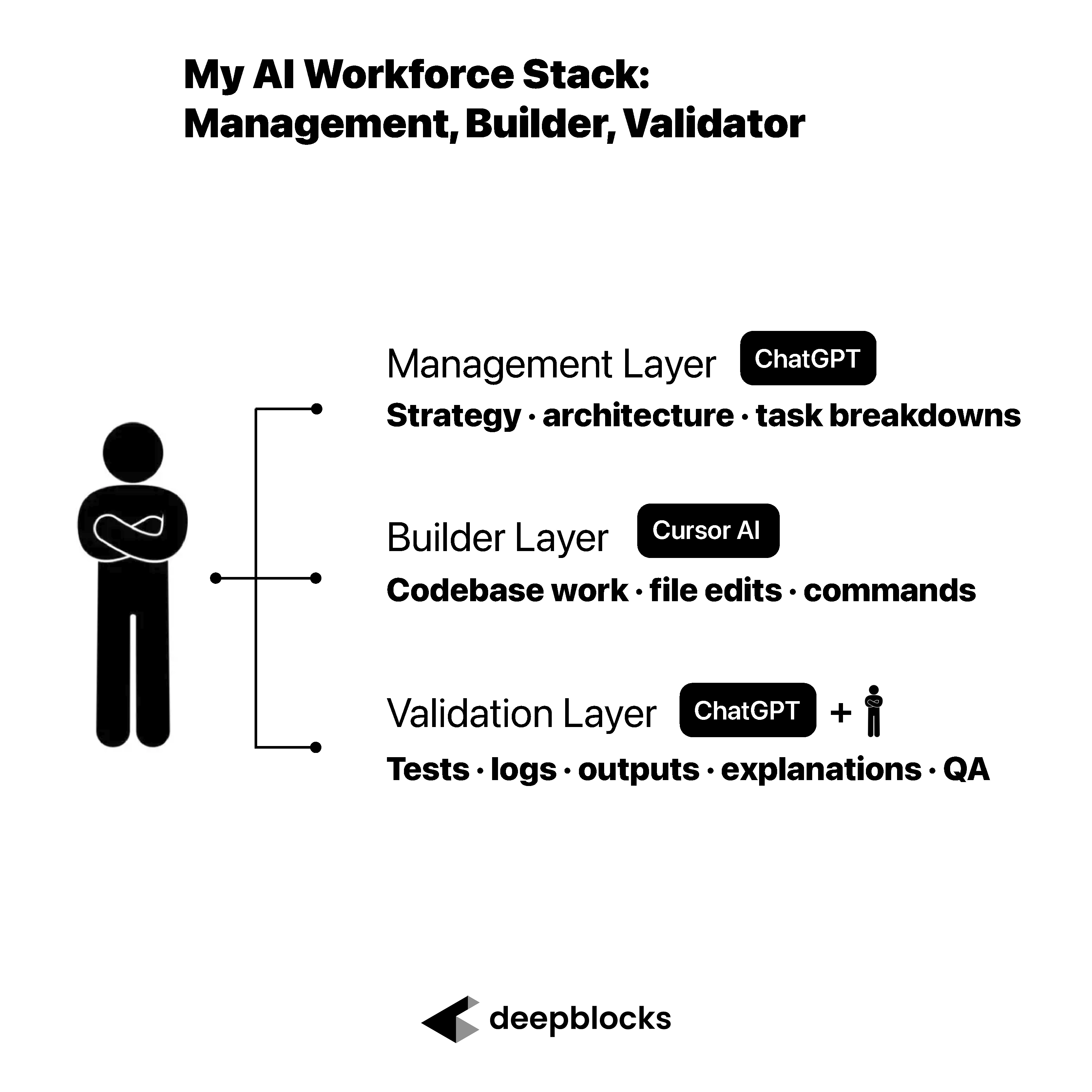

The AI workforce stack I use has three main layers.

The first is the management layer.

The second is the builder layer.

The third is the validation layer.

Each layer has a different job.

The management layer helps me think through the project, organize the work, define the architecture, break down tasks, and prepare instructions.

The builder layer executes closer to the codebase. It reads files, edits code, runs commands, and returns feedback.

The validation layer checks whether the work actually makes sense. It reviews the output, asks what changed, looks for gaps, and helps me decide whether the result solves the original problem.

The important point is that I do not treat AI as one generic tool.

I treat it more like a team.

Different tools have different jobs.

And my job is to manage the work between them.
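One way to make that division of labor concrete is to write it down as data. The sketch below is only an illustration, not a tool from my actual setup: the names `Layer`, `RESPONSIBILITIES`, and `layer_for` are hypothetical, and the responsibility lists simply restate the descriptions above.

```python
from enum import Enum


class Layer(Enum):
    MANAGEMENT = "management"
    BUILDER = "builder"
    VALIDATION = "validation"


# Responsibilities restated from the text; the structure itself is an assumption.
RESPONSIBILITIES = {
    Layer.MANAGEMENT: [
        "think through the project",
        "organize the work",
        "define the architecture",
        "break down tasks",
        "prepare instructions",
    ],
    Layer.BUILDER: ["read files", "edit code", "run commands", "return feedback"],
    Layer.VALIDATION: [
        "review the output",
        "ask what changed",
        "look for gaps",
        "decide whether the result solves the original problem",
    ],
}


def layer_for(task: str) -> Layer:
    """Return the layer whose responsibility list contains the task."""
    for layer, tasks in RESPONSIBILITIES.items():
        if task in tasks:
            return layer
    raise ValueError(f"No layer owns the task: {task!r}")


print(layer_for("edit code").value)
```

A task that no layer owns raises an error instead of being quietly assigned somewhere, which mirrors the point that each tool should have a clear job.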

Layer 1: The Management AI Layer

The management layer is where I think through the project.

This is where I work through strategy, software architecture, product decisions, campaign logic, task breakdowns, and implementation planning.

A tool like ChatGPT is useful here because its Projects feature can keep long-running work organized. OpenAI describes Projects as workspaces where chats, uploaded files, and custom instructions live together under a shared objective, which suits work that is repeated and evolving.

That matters when I am managing multiple workstreams at once.

For example, if I am building an AI marketing lab, I need to think through the content strategy, the email workflow, the database structure, the automation logic, the campaign tracking, and the feedback loop.

Those are not isolated tasks.

They are connected parts of one system.

The management layer gives me a place to keep the project context together and turn a large goal into smaller, actionable instructions.

Why the Management Layer Gets Exhausted

The management layer does a lot of work.

It helps me define what I want to build.

It helps me break the project into steps.

It helps me write instructions for the builder layer.

It helps me interpret technical feedback.

It helps me validate whether the work is aligned with the original goal.

That is a lot for one AI workspace.

In one recent two-month project, I ended up with more than 40 exhausted chats. That is not because the tool was failing. It is because the management layer was doing real work across strategy, architecture, implementation planning, debugging, documentation, and validation.

This is why a higher-usage plan can matter if you are doing this seriously. OpenAI describes ChatGPT Pro tiers as designed for heavier workflows, with higher usage limits than Plus and support for demanding work across parallel projects.

The lesson is not that everyone needs the most expensive AI plan.

The lesson is that serious AI-assisted building creates a lot of context.

And context has to be managed.

Layer 2: The Builder AI Layer

The second layer is the builder layer.

This is where tools like Cursor become valuable.

The builder layer operates closer to the codebase. It is not just answering questions about code from the outside. It is working inside the environment where the software is being built.

Cursor positions its product around agentic coding and “complete codebase understanding,” meaning the tool is meant to learn how a codebase works regardless of its scale and complexity. Cursor’s own agent guidance describes an agent harness that includes instructions, tools (such as file editing, codebase search, and terminal execution), and the model selected for the task.

That is why the builder layer is different from the management layer.

The management layer helps define the work.

The builder layer implements it.

In practice, I may ask the management layer to create detailed instructions for a feature, workflow, fix, or architectural change. Then I bring those instructions into the builder layer, where Cursor can inspect the project, make changes, run commands, and report back on what happened.

This avoids the old workflow of asking a chatbot for code, copying it, pasting it into the project, and hoping I put it in the right place.
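The handoff from the management layer to the builder layer works best when the instructions have a consistent shape. Here is a minimal sketch of what such an instruction packet could look like. It assumes a plain-text brief pasted into the builder tool; `TaskBrief`, its fields, and the example task are all hypothetical and are not part of Cursor or ChatGPT.

```python
from dataclasses import dataclass, field


@dataclass
class TaskBrief:
    """Hypothetical instruction packet handed from the management layer to the builder layer."""

    goal: str
    steps: list[str]
    constraints: list[str] = field(default_factory=list)
    # What the builder should report when done (an assumed default).
    report_back: list[str] = field(
        default_factory=lambda: ["what changed", "commands run", "open questions"]
    )

    def render(self) -> str:
        """Format the brief as plain text to paste into the builder tool."""
        lines = [f"Goal: {self.goal}", "", "Steps:"]
        lines += [f"{i}. {step}" for i, step in enumerate(self.steps, start=1)]
        if self.constraints:
            lines += ["", "Constraints:"]
            lines += [f"- {c}" for c in self.constraints]
        lines += ["", "Report back on:"]
        lines += [f"- {item}" for item in self.report_back]
        return "\n".join(lines)


brief = TaskBrief(
    goal="Add campaign tracking to the email workflow",
    steps=["Inspect the existing email module", "Add a tracking field", "Run the tests"],
    constraints=["Do not change the database schema without asking"],
)
print(brief.render())
```

The "Report back on" section is the part that matters most to me in this sketch, because it tells the builder up front what the validation step will need.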

The builder AI is closer to the actual software.

That makes it much more useful.

But it also makes validation more important.

Layer 3: The Validation AI Layer

The third layer is the validation layer.

This is the most important layer.

One AI can propose the build.

Another AI can implement it.

Another AI can review it.

But I still need to understand what happened.

I still need tests, logs, outputs, and explanations.

I still need to know whether the result solves the original problem.

That is the job.

The expert does not need to write every line of code.

The expert needs to manage the work.

This is where many people misunderstand AI-assisted building. They assume the hard part is getting AI to generate the code. But in a real workflow, generation is only one part of the process.

The harder questions are:

What changed?

Why did it change?

What assumptions were made?

What could break?

How was it tested?

Does the output match the original goal?

Does the workflow solve the actual business problem?

Those are validation questions.

And without validation, AI-assisted building becomes risky very quickly.
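The questions above can be turned into a simple gate: a change is not ready for my decision until every question has an answer. This is a minimal sketch under that assumption. The function names `unanswered` and `ready_for_sign_off` and the sample answers are hypothetical; the questions themselves are the ones listed above.

```python
VALIDATION_QUESTIONS = [
    "What changed?",
    "Why did it change?",
    "What assumptions were made?",
    "What could break?",
    "How was it tested?",
    "Does the output match the original goal?",
    "Does the workflow solve the actual business problem?",
]


def unanswered(answers: dict[str, str]) -> list[str]:
    """Return the validation questions that have no non-empty answer yet."""
    return [q for q in VALIDATION_QUESTIONS if not answers.get(q, "").strip()]


def ready_for_sign_off(answers: dict[str, str]) -> bool:
    """True only when every validation question has been answered."""
    return not unanswered(answers)


# Illustrative answers: one question answered, one left blank, the rest missing.
answers = {
    "What changed?": "Added a tracking field to the email workflow.",
    "How was it tested?": "",
}
for question in unanswered(answers):
    print("Still open:", question)
```

The check only confirms that an answer exists. Judging whether the answer is good enough is still the expert's call.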

Managing AI Is Similar to Managing a Human Team

Managing AI is closer to managing a human team than people realize.

You do not ask an engineer to build something and then disappear.

You ask questions.

You review the output.

You clarify the requirement.

You pressure-test the assumptions.

You decide whether the result is good enough.

But you also do not ask an engineer to explain every single line of code.

At some point, you trust the process.

You trust the quality-control system.

You trust that the work will be tested.

You trust that anything serious will become visible during QA.

AI-assisted work requires a similar balance.

You cannot blindly accept everything.

But you also cannot micromanage every token, every line, every function, and every decision.

The expert’s job is to understand enough to manage the direction, review the outputs, and know when to ask for more explanation.

That is the new skill.

Not coding everything yourself.

Managing the AI-assisted workflow.

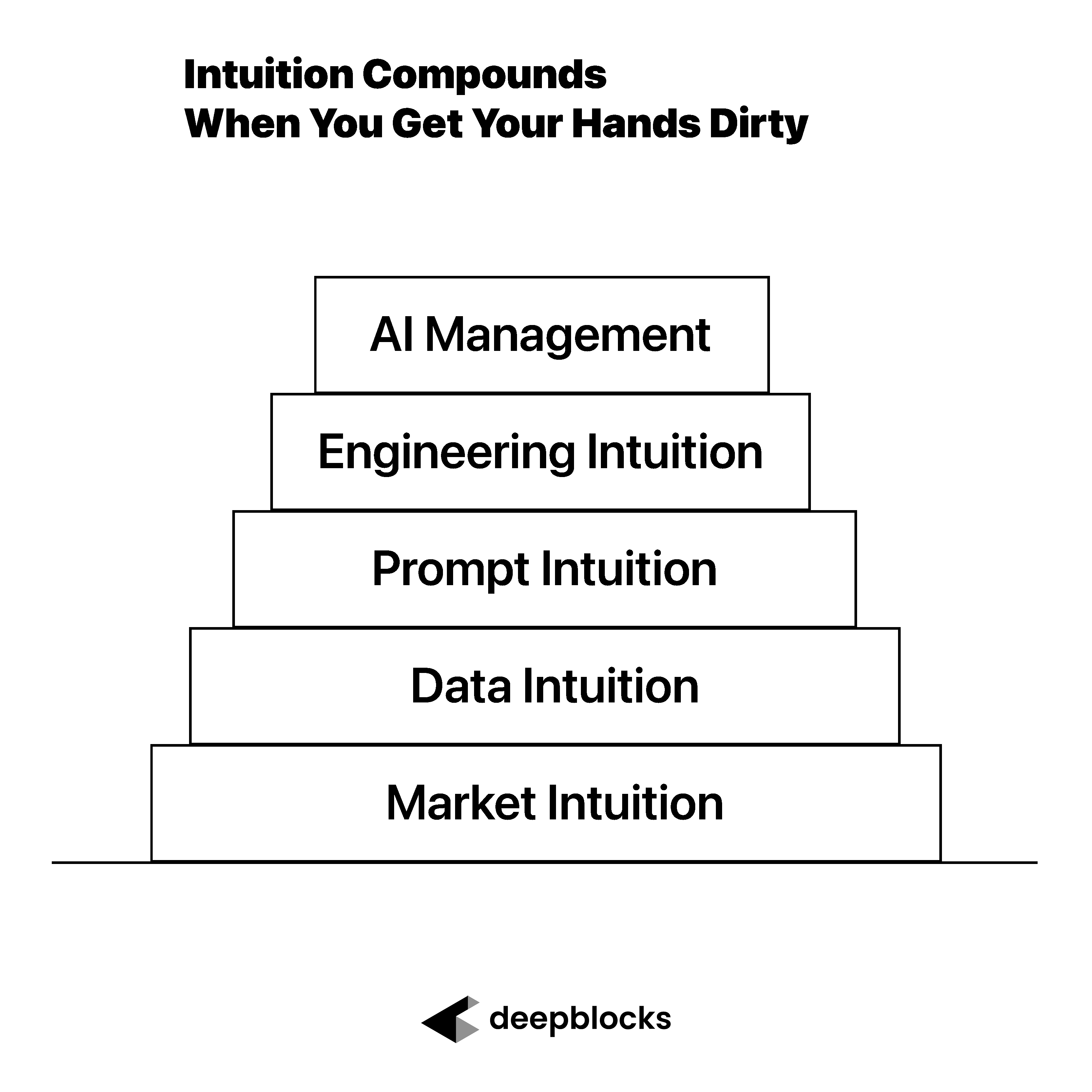

Why the Expert Still Owns the Outcome

Even with an AI workforce stack, the expert still owns the outcome.

That is the part that cannot be delegated.

The management layer can help define the plan.

The builder layer can help implement the software.

The validation layer can help review the work.

But the expert still has to decide whether the result matters.

Does this solve the right problem?

Does this match the business goal?

Does this workflow reflect how the industry actually works?

Would a user trust this output?

Is this worth improving?

Should this be automated at all?

Those questions require domain judgment.

For me, that domain is real estate.

A coding agent may be able to build a workflow. But it does not automatically know whether a real estate feasibility output is meaningful, whether a lead list is valuable, whether a market assumption is realistic, or whether the workflow is solving the right operational problem.

That is why the expert cannot leave the loop.

AI can accelerate the work.

The expert still has to direct it.

The AI Workforce Stack Is Really a Management System

The phrase “AI workforce” can sound like the point is autonomy.

But for me, the more important idea is management.

The stack only works when each layer has a clear role.

The management layer thinks, plans, and instructs.

The builder layer implements, edits, tests, and reports.

The validation layer reviews, questions, and checks alignment.

The expert moves between all three.

That is the system.

And the better the expert becomes at managing the system, the more leverage they get.

This is not about replacing a team with a chatbot.

It is about learning how to coordinate AI tools the way you would coordinate specialized contributors.

Each one needs context.

Each one needs a clear task.

Each one needs review.

Each one needs feedback.

That is where the expert’s work changes.

AI does not eliminate management.

It makes management more important.

What This Means for Real Estate Experts

For real estate experts, this is a very practical shift.

Many of us have workflows we would like to improve.

Lead generation.

Site selection.

Feasibility analysis.

Market research.

Content creation.

Email campaigns.

Reporting.

Data cleanup.

Internal dashboards.

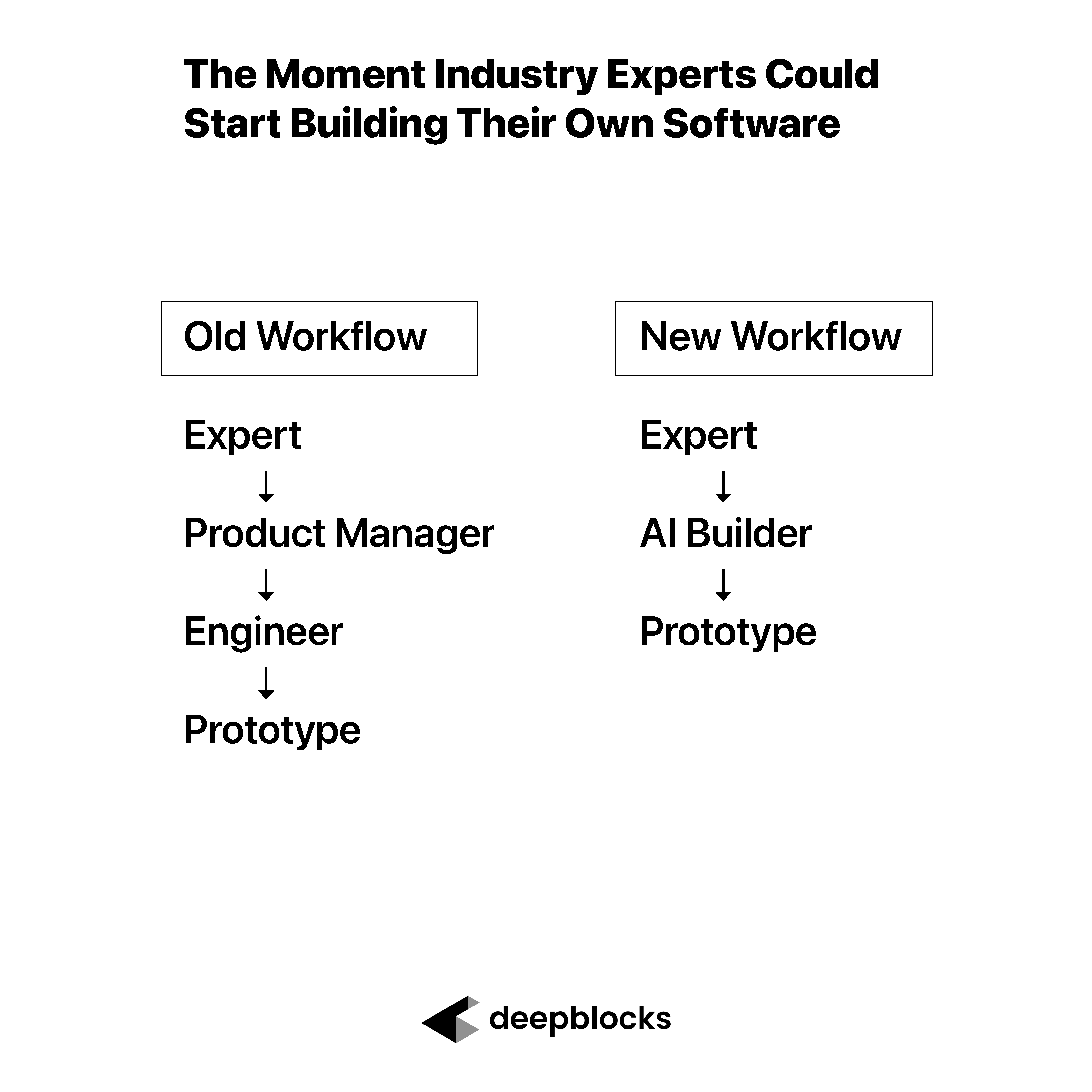

Historically, turning those workflows into software required a team, a budget, a technical roadmap, and a lot of translation.

Now, an expert can start earlier.

They can use a management AI layer to think through the workflow.

They can use a builder AI layer to create a first version.

They can use a validation layer to review whether the result works.

That does not mean the result is production-ready.

It does not mean engineers are unnecessary.

It means the expert can move from idea to prototype with much more agency than before.

That is the power of the AI workforce stack.

It gives the expert a way to begin.

Conclusion: AI Does Not Eliminate Management. It Makes Management More Important.

Today, I do not think of AI as one tool.

I think of it as a stack.

A management layer.

A builder layer.

A validation layer.

Each layer has a job, and the expert’s role is to keep the work moving between them.

That means writing better instructions.

Asking better questions.

Reviewing outputs.

Understanding what changed.

Testing the result.

Knowing when to push back.

Knowing when to trust.

And knowing whether the work actually solves the problem.

The expert does not need to write every line of code.

But the expert does need to stay responsible for context, judgment, and validation.

That is the new management discipline.

AI does not eliminate management.

It makes management more important.